Using Proxies for Web Scraping: A Practical Guide

Web scraping at scale requires proxies to avoid IP bans, maintain anonymity, and collect data reliably across millions of requests. Here's how to build it right.

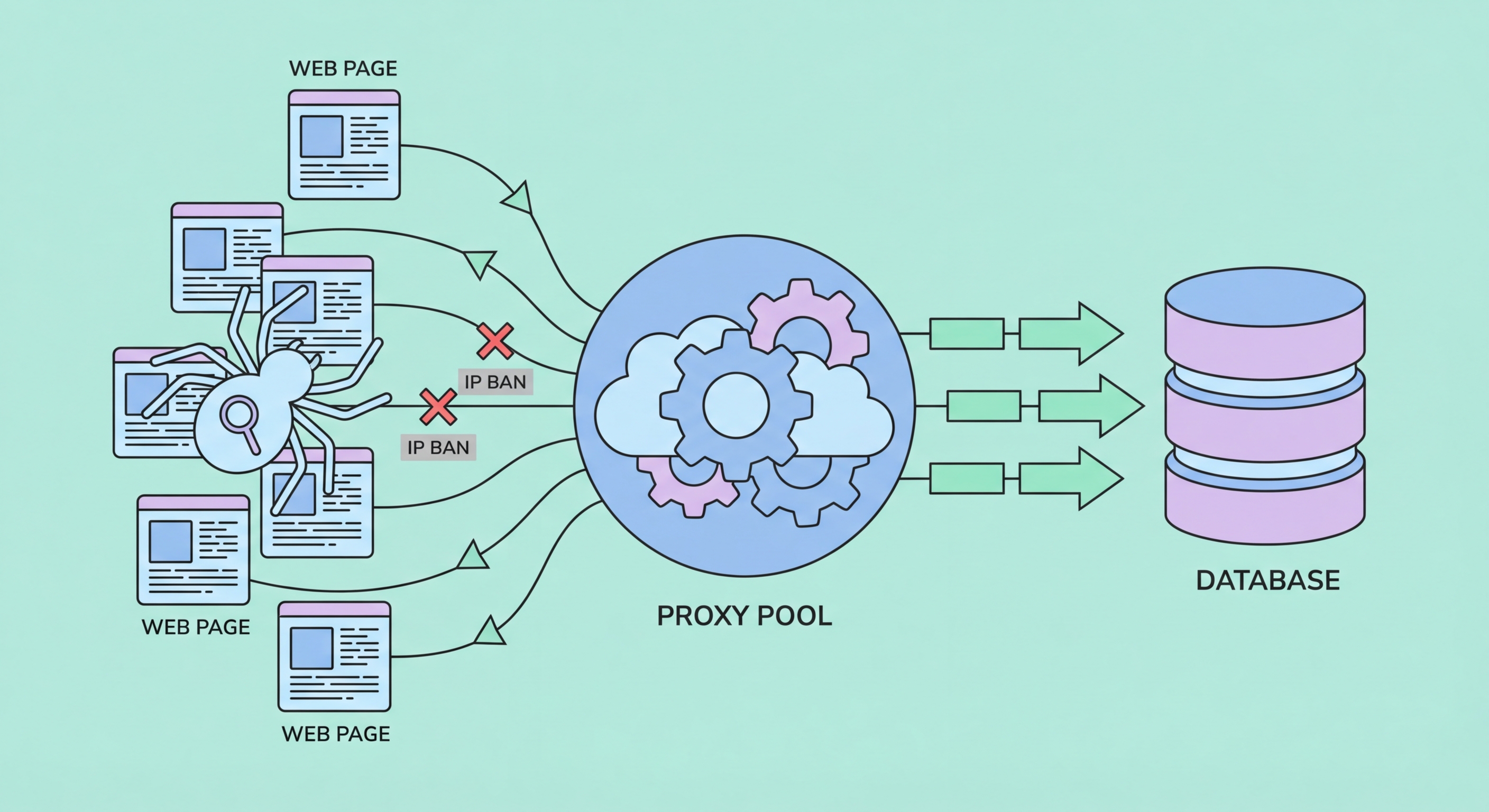

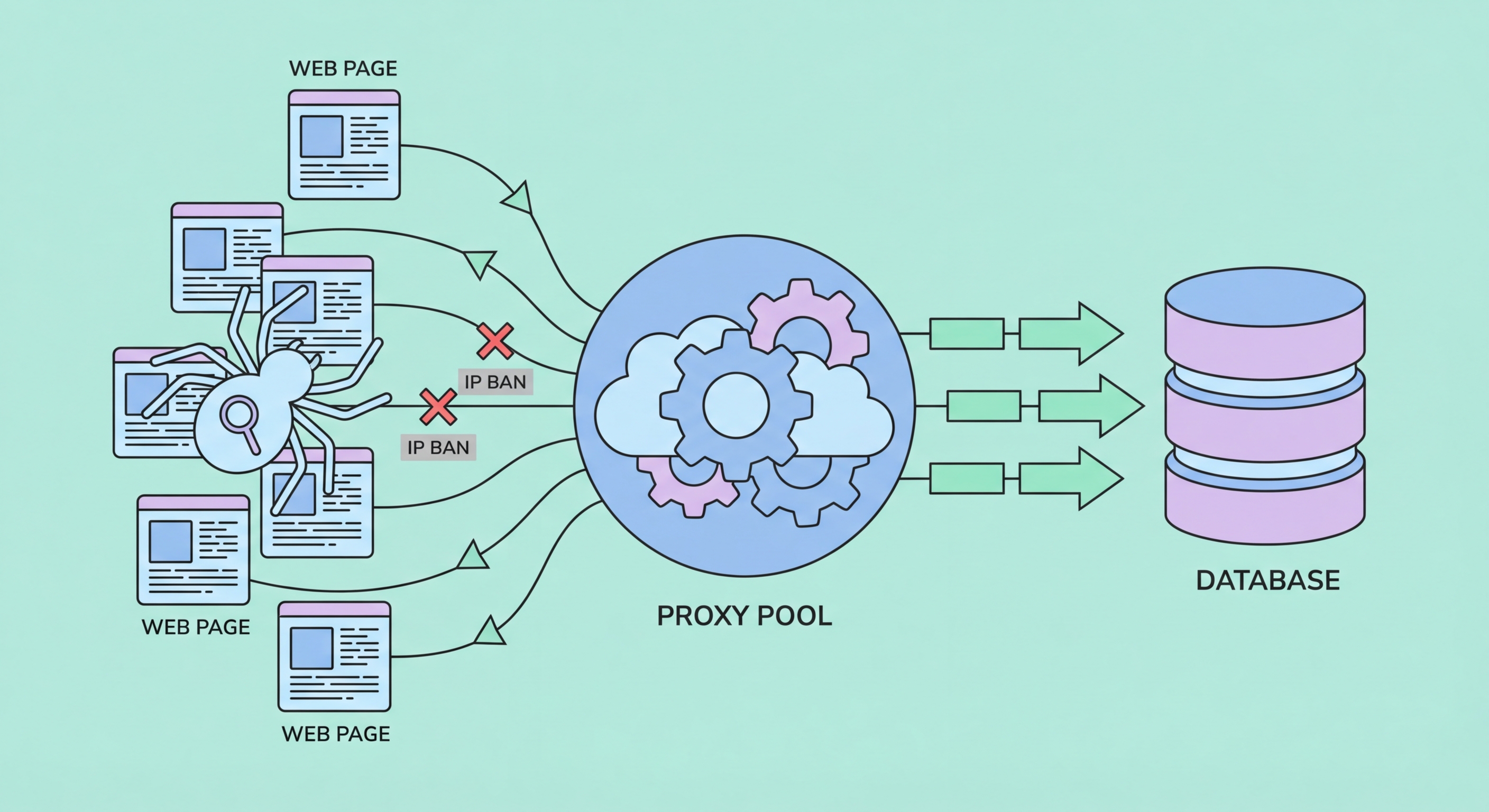

Web scraping, the automated collection of publicly available data from websites, becomes technically challenging at scale because websites deploy measures to detect and block automated traffic. The most fundamental defense is IP-based rate limiting: track how many requests come from each IP address, and block any IP that exceeds a threshold. For a scraping job that needs to collect 1 million product listings from an e-commerce site, a single IP address would typically be blocked after a few hundred requests, making the job impractical without proxy infrastructure.
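To make the mechanism concrete, here is a minimal sketch of how a site might implement IP-based rate limiting with a sliding window. The class name `IpRateLimiter`, the threshold of 3 requests per 60 seconds, and the documentation-range IP addresses are all assumptions chosen for illustration; real sites use their own (usually undisclosed) thresholds and additional signals.

```python
from collections import defaultdict, deque


class IpRateLimiter:
    """Hypothetical server-side limiter: block an IP once it exceeds a threshold."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # ip -> timestamps of recent requests

    def allow(self, ip: str, now: float) -> bool:
        hits = self._hits[ip]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the threshold: this request is blocked
        hits.append(now)
        return True


# Five requests from one IP: the fourth and fifth are blocked.
limiter = IpRateLimiter(max_requests=3, window_seconds=60)
single_ip = [limiter.allow("203.0.113.5", now=t) for t in range(5)]

# The same five requests spread over five IPs all pass.
spread_limiter = IpRateLimiter(max_requests=3, window_seconds=60)
many_ips = [spread_limiter.allow(f"203.0.113.{t}", now=t) for t in range(5)]
```

The second half of the example is the whole argument for proxies in miniature: the request volume is identical, but no single IP crosses the threshold.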

Without proxies, a scraper is fully identifiable: the target website sees all requests coming from the same IP, which belongs to the scraper operator's ISP, cloud provider, or VPN. With proxies, requests are distributed across many different IP addresses, so the traffic pattern looks much more like many independent users visiting the same website concurrently, which is what any popular site's servers see in normal operation. The scraper's true origin IP never contacts the target site directly.

Proxies also enable geographic data collection that would otherwise be impossible. E-commerce platforms frequently show different prices to users from different countries, serving prices in local currencies with local tax treatment. Search engines personalise results based on the user's geographic location, so checking search rankings from different cities requires using IPs genuinely located in those cities. Without residential proxies with genuine local IP addresses, the data collected would be the platform's default international view rather than the geographically accurate local experience.

A production-grade web scraping stack involves several components working together: a request scheduler, a proxy manager, a parser, a storage backend, and error handling logic. The proxy manager is responsible for selecting which IP to use for each request, tracking which IPs have been recently used or have failed, and managing sticky sessions when needed. Many teams use open-source frameworks like Scrapy (Python) or Crawlee (Node.js) that have built-in proxy rotation support through middleware and plugins.
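As a rough illustration of the proxy manager's three responsibilities (selection, failure tracking, sticky sessions), here is a minimal in-memory sketch. `ProxyManager`, its method names, the cooldown value, and the `proxy*.example.com` URLs are hypothetical; frameworks such as Scrapy and Crawlee have their own APIs for this, which this sketch does not attempt to reproduce.

```python
import itertools
import time
from typing import Dict, List, Optional


class ProxyManager:
    """Hypothetical round-robin proxy selector with cooldowns and sticky sessions."""

    def __init__(self, proxies: List[str], cooldown_seconds: float = 300.0) -> None:
        if not proxies:
            raise ValueError("proxy list is empty")
        self._proxies = list(proxies)
        self._cycle = itertools.cycle(self._proxies)
        self._cooldown = cooldown_seconds
        self._failed_at: Dict[str, float] = {}  # proxy -> time of last failure
        self._sticky: Dict[str, str] = {}       # session id -> pinned proxy

    def _is_available(self, proxy: str, now: float) -> bool:
        failed = self._failed_at.get(proxy)
        return failed is None or now - failed >= self._cooldown

    def get(self, session_id: Optional[str] = None, now: Optional[float] = None) -> str:
        now = time.monotonic() if now is None else now
        # Sticky session: reuse the pinned proxy while it is still healthy.
        if session_id is not None:
            pinned = self._sticky.get(session_id)
            if pinned is not None and self._is_available(pinned, now):
                return pinned
        # Otherwise take the next healthy proxy in round-robin order.
        for _ in range(len(self._proxies)):
            proxy = next(self._cycle)
            if self._is_available(proxy, now):
                if session_id is not None:
                    self._sticky[session_id] = proxy
                return proxy
        raise RuntimeError("no healthy proxies available")

    def mark_failed(self, proxy: str, now: Optional[float] = None) -> None:
        # Put the proxy on cooldown, e.g. after a 403, 429, or timeout.
        self._failed_at[proxy] = time.monotonic() if now is None else now


manager = ProxyManager(
    ["http://proxy1.example.com:8000",
     "http://proxy2.example.com:8000",
     "http://proxy3.example.com:8000"],
    cooldown_seconds=10,
)
first = manager.get(now=0)
second = manager.get(now=0)
manager.mark_failed("http://proxy3.example.com:8000", now=0)
third = manager.get(now=1)                      # skips proxy3, wraps to proxy1
cart_a = manager.get(session_id="cart", now=1)  # pins the next healthy proxy
cart_b = manager.get(session_id="cart", now=2)  # same proxy again
```

Round-robin is only one possible selection policy; a production manager might instead weight proxies by recent success rate or pick the least recently used IP.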

Request headers are as important as proxy configuration for avoiding detection. A browser visiting a website sends dozens of headers: User-Agent, Accept, Accept-Language, Accept-Encoding, Referer, Sec-Fetch headers, and more. A naive scraper sending requests with only a URL and no headers, or with an obviously non-browser User-Agent like python-requests/2.28.0, will be identified and blocked regardless of proxy rotation. Your scraper should send realistic browser headers that match the User-Agent string being used. Rotating through several different User-Agent strings (representing different browser versions and operating systems) further reduces fingerprinting.
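One way to keep headers consistent with the User-Agent is to rotate whole header profiles rather than User-Agent strings alone. The sketch below assumes two illustrative profiles; the exact header values are examples of the general shape, not a verified copy of what any browser version sends, so capture real values from the browsers you intend to imitate. `PROFILES`, `COMMON`, and `browser_headers` are hypothetical names.

```python
import random
from typing import Dict, Optional

# Headers shared by both profiles (illustrative values).
COMMON = {
    "Accept-Language": "en-US,en;q=0.9",
    "Accept-Encoding": "gzip, deflate",
    "Sec-Fetch-Dest": "document",
    "Sec-Fetch-Mode": "navigate",
    "Sec-Fetch-Site": "none",
}

# Each profile bundles a User-Agent with the headers that go with it.
PROFILES = [
    {  # Chrome on Windows: includes a client-hint header
        "User-Agent": ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
                       "AppleWebKit/537.36 (KHTML, like Gecko) "
                       "Chrome/124.0.0.0 Safari/537.36"),
        "Accept": ("text/html,application/xhtml+xml,application/xml;q=0.9,"
                   "image/avif,image/webp,*/*;q=0.8"),
        "sec-ch-ua-platform": '"Windows"',
    },
    {  # Firefox on macOS: no sec-ch-ua client hints
        "User-Agent": ("Mozilla/5.0 (Macintosh; Intel Mac OS X 10.15; rv:125.0) "
                       "Gecko/20100101 Firefox/125.0"),
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    },
]


def browser_headers(rng: random.Random, referer: Optional[str] = None) -> Dict[str, str]:
    """Pick one profile at random and return a complete, self-consistent header set."""
    headers = {**COMMON, **rng.choice(PROFILES)}
    if referer:
        headers["Referer"] = referer
        # Assumption: the referring page is on the same site as the target.
        headers["Sec-Fetch-Site"] = "same-origin"
    return headers


headers = browser_headers(random.Random(), referer="https://example.com/")
```

The resulting dictionary can be passed to whichever HTTP client you use, for example as the `headers=` argument of `requests.get`.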

Request timing is the third pillar of detection avoidance. A real human browsing a website takes variable amounts of time between page loads — reading content, scrolling, considering options. A bot making requests at exactly 2-second intervals, or at maximum speed, is immediately identifiable. Implement random delays between requests drawn from a realistic distribution (e.g., uniform random between 2–8 seconds, occasionally longer). Add additional thinking time after pages with high information density. Mirror the timing patterns of human browsing rather than optimising purely for speed — the additional time cost is typically small compared to the cost of proxy bandwidth wasted on blocked requests.
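The delay policy described above can be sketched in a few lines. The 2 to 8 second range comes from the text; the 10% chance of a longer pause, the 30-second cap, and the extra allowance for dense pages are assumptions to tune against your own targets. `human_delay` is a hypothetical helper name.

```python
import random
import time


def human_delay(
    rng: random.Random,
    low: float = 2.0,
    high: float = 8.0,
    long_pause_chance: float = 0.1,   # assumption: 1 in 10 delays is a long one
    long_pause_max: float = 30.0,     # assumption: cap for the occasional long pause
    dense_page: bool = False,
    dense_extra_max: float = 5.0,     # assumption: extra "reading" time on dense pages
) -> float:
    """Return a randomised delay in seconds between two page loads."""
    if rng.random() < long_pause_chance:
        delay = rng.uniform(high, long_pause_max)  # occasional longer pause
    else:
        delay = rng.uniform(low, high)             # the usual 2-8 second gap
    if dense_page:
        delay += rng.uniform(0.0, dense_extra_max)
    return delay


def polite_sleep(rng: random.Random, dense_page: bool = False) -> None:
    """Block for a human-like interval before the next request."""
    time.sleep(human_delay(rng, dense_page=dense_page))
```

Calling `polite_sleep(rng)` between requests within each proxy session keeps per-IP timing irregular; overall throughput then comes from running more sessions in parallel rather than from shortening the delays.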

Web scraping occupies a complex legal landscape that varies by jurisdiction and the specific data being collected. In Hong Kong, the collection of publicly accessible web data for legitimate business purposes is generally lawful. The Personal Data (Privacy) Ordinance (PDPO) is the key legislation to consider: if you're scraping personal data (names, email addresses, phone numbers, or any information that identifies an individual), you must comply with PDPO requirements regarding collection purpose, use limitation, and data retention. Collecting publicly posted business information or pricing data that doesn't identify individuals is generally outside PDPO scope.

Website Terms of Service (ToS) often prohibit automated scraping. While violating a website's ToS is typically a civil matter between you and the website operator rather than a criminal offense, it can result in your access being terminated and potentially civil legal action. The legal status of ToS-prohibited scraping has been contested in courts internationally. In the US, the Ninth Circuit in hiQ Labs v. LinkedIn held that scraping publicly accessible data likely does not violate the Computer Fraud and Abuse Act, but the same litigation later produced a ruling that hiQ had breached LinkedIn's user agreement, so this is not settled law. Consulting a lawyer familiar with both the jurisdiction of your operations and the jurisdiction of the websites you're scraping is advisable for commercial operations at scale.

Ethical considerations extend beyond legal compliance. Even when scraping is lawful, aggressive scraping can degrade a website's performance for legitimate users by consuming server resources. Best practices include respecting robots.txt directives (the file at example.com/robots.txt that specifies which pages crawlers may access), rate-limiting your requests to a level that doesn't impact site performance, scraping during off-peak hours when possible, and reaching out to websites for data access partnerships or official APIs when available. Many companies are willing to provide structured data access to legitimate business users who approach them directly.
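Checking robots.txt can be automated with Python's standard-library `urllib.robotparser`. The sketch below parses an inline robots.txt so that it runs without network access; the file contents, the `example-scraper` user agent, and the URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents, inlined so the example needs no network.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Crawl-delay: 5
"""

USER_AGENT = "example-scraper"  # assumption: the name your crawler identifies as

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def may_fetch(url: str) -> bool:
    """True if the parsed robots.txt rules allow USER_AGENT to fetch the URL."""
    return parser.can_fetch(USER_AGENT, url)


product_ok = may_fetch("https://example.com/products/123")
checkout_ok = may_fetch("https://example.com/checkout/cart")
delay = parser.crawl_delay(USER_AGENT)  # seconds requested by the site, or None
```

Against a live site you would instead call `parser.set_url("https://example.com/robots.txt")` followed by `parser.read()`, and treat any `Crawl-delay` value as a lower bound for your request spacing.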

For web scraping use cases, proxy provider selection criteria differ somewhat from general proxy needs. The most important factors are pool size (larger pools support more concurrent scrapers and reduce IP reuse frequency), geographic coverage (targeting specific countries or cities requires genuine IPs in those locations), API quality (good documentation, reliable performance, and programmatic session management are essential for production systems), and pricing model alignment with your usage pattern.

Several proxy providers have developed products specifically for the scraping use case that go beyond raw proxy provision. Services like Bright Data's Web Unlocker and Smartproxy's Site Unblocker are managed scraping APIs that handle proxy rotation, CAPTCHA solving, browser fingerprint management, and JavaScript rendering automatically — you send a URL, they return the rendered HTML. These managed services cost more per request than raw proxies but reduce engineering complexity significantly for teams that don't want to build and maintain the full scraping stack themselves.

For teams building in-house scraping infrastructure, Bright Data, Oxylabs, Smartproxy, and IPRoyal are the most established providers with proven track records for scraping workloads. Evaluate them by running a trial on your actual target websites — success rates vary by target, and no provider can guarantee results without testing against your specific targets. Look for providers that offer pay-as-you-go pricing for initial testing (to evaluate without committing to a large subscription) and that have active technical support for integration questions.
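A trial is easier to compare across providers if every request is logged and the results are reduced to a success rate per provider. The sketch below assumes a trial log of `(provider_label, http_status)` pairs and counts any 2xx status as a success; both the log format and that definition of success are assumptions, and the sample data is invented. In practice a 200 response can still be a CAPTCHA or block page, so a content check is worth adding.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def success_rates(results: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """Map each provider label to its share of 2xx responses in the trial log."""
    total: Counter = Counter()
    ok: Counter = Counter()
    for provider, status in results:
        total[provider] += 1
        if 200 <= status < 300:
            ok[provider] += 1
    return {provider: ok[provider] / total[provider] for provider in total}


# Invented sample log for two anonymous providers against the same target.
trial_log = [
    ("provider_a", 200), ("provider_a", 403), ("provider_a", 200), ("provider_a", 200),
    ("provider_b", 429), ("provider_b", 200),
]
rates = success_rates(trial_log)
```

Run the same URL list through each provider at the same time of day so the comparison reflects the provider rather than changes on the target site.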